Engineering budgets are where SaaS spending gets real. Product managers might debate Slack vs Teams. But engineering teams need their tools to work, and they'll spend whatever it takes to make that happen.

We looked at which tools engineering teams are actually paying for, how much they're spending per team, and what the modern development stack looks like in 2026. The picture is complex, expensive, and revealing about how companies actually build software.

The Modern Engineering Stack Costs More Than You Think

A mid-market engineering team typically uses: version control (GitHub), issue tracking and CI/CD (Atlassian or GitHub), error tracking (Sentry), observability (Datadog), IDE licenses (JetBrains), password management (1Password), and increasingly, AI-powered coding assistants (Cursor, Claude).

Average annual spend per buyer (that is, per company, not per engineer) looks like this: GitHub at $7,934, Atlassian at $23,608, Datadog at $30,809, Sentry at $3,424, JetBrains at $6,160, Cursor at $5,857, and 1Password at $4,955.

That's roughly $82,000 in annual tooling spend for a typical engineering organization that buys all seven. For a 50-person engineering team, that's $1,640 per engineer. For a 200-person team carrying the same bill, economies of scale bring it down to $410 per engineer. These are averages across buyers, so actual bills vary with seat counts and usage.
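The arithmetic behind that total can be checked with a short sketch. It assumes one company buys all seven tools at the average per-buyer price and that the bill stays flat as the team grows; both are simplifications, and the `per_engineer` helper is only illustrative. The exact sum comes out slightly above the rounded $82,000, so the per-engineer figures land a few dollars higher.

```python
# Average annual spend per buyer (per company), taken from the figures above.
AVERAGE_SPEND = {
    "GitHub": 7_934,
    "Atlassian": 23_608,
    "Datadog": 30_809,
    "Sentry": 3_424,
    "JetBrains": 6_160,
    "Cursor": 5_857,
    "1Password": 4_955,
}


def per_engineer(total_spend: float, engineers: int) -> float:
    """Spread a fixed annual tooling bill evenly across a team (illustrative helper)."""
    return total_spend / engineers


total = sum(AVERAGE_SPEND.values())
print(f"Total stack spend: ${total:,}")

# Assumption: the total does not change with headcount.
for team_size in (50, 200):
    print(f"{team_size} engineers: ${per_engineer(total, team_size):,.0f} per engineer")
```

With the unrounded total of $82,747, this prints about $1,655 per engineer at 50 engineers and about $414 at 200.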

The GitHub vs Atlassian Question

GitHub sits at 56.5% adoption with $7,934 average spend. Atlassian (which includes Jira, Bitbucket, and Confluence) sits at 49% adoption with $23,608 average spend. This gap tells a story.

GitHub started as the version control platform and is becoming more. Its roadmap is moving into issue tracking, CI/CD, and even observability. For startups and smaller teams, GitHub is sufficient infrastructure: use GitHub Issues instead of Jira, use GitHub Actions instead of CircleCI, and keep everything in one place.

Atlassian is winning in enterprises and mid-market because it offers deeper customization, compliance controls, and integration breadth. GitHub is winning in velocity and simplicity. The $15,000+ gap reflects the difference between "good enough" and "enterprise-grade."

Interestingly, both are growing. GitHub is gaining adoption. Atlassian is not declining. This suggests the market is bifurcating: small and medium teams consolidate on GitHub, while large teams standardize on Atlassian. Relatively few companies appear to use both, although adoption figures that add up to more than 100% imply that at least some do.
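The adoption figures above put a floor and a ceiling on how many companies pay for both GitHub and Atlassian. The sketch below applies the inclusion-exclusion bound, assuming both percentages are measured over the same set of companies (the analysis does not state this explicitly). The function name `overlap_bounds` is illustrative.

```python
def overlap_bounds(adoption_a: float, adoption_b: float) -> tuple[float, float]:
    """Return the (minimum, maximum) share of companies using both tools.

    Inputs and outputs are percentages of the same population (an assumption).
    """
    # If the two shares add up to more than 100%, the excess must be overlap.
    lower = max(0.0, adoption_a + adoption_b - 100.0)
    # The overlap can never exceed the smaller of the two groups.
    upper = min(adoption_a, adoption_b)
    return lower, upper


low, high = overlap_bounds(56.5, 49.0)
print(f"Companies using both: between {low:.1f}% and {high:.1f}%")
```

Under that assumption, at least 5.5% of companies must be paying for both, and the data alone cannot rule out a much larger overlap.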

Observability Costs Are Exploding

Datadog is the most expensive tool in the engineering stack at $30,809 per buyer. This is not accidental.

Datadog is a broad platform rather than a specialized tool. It covers infrastructure observability across logs, metrics, and traces. Many teams still add dedicated tools for specific layers: Sentry for error tracking ($3,424 average), specific APM tools, and synthetic monitoring vendors.

But Datadog keeps winning because it offers a unified platform. One contract, one dashboard, one user model for logs, metrics, and traces. The alternative is using four different vendors and integrating their APIs. Datadog's high spend reflects that consolidation benefit.

The Datadog cost is also driven by usage: more services generating logs and metrics means higher bills. A company running 50 microservices pays more than a company running 5. The cost is transparent and tied directly to infrastructure complexity.

This is why some teams are exploring alternatives: open-source observability tools like Grafana, or cloud-native solutions like AWS CloudWatch. But for most mid-market teams, Datadog's $30,809 annual bill is the price of running distributed systems at scale.

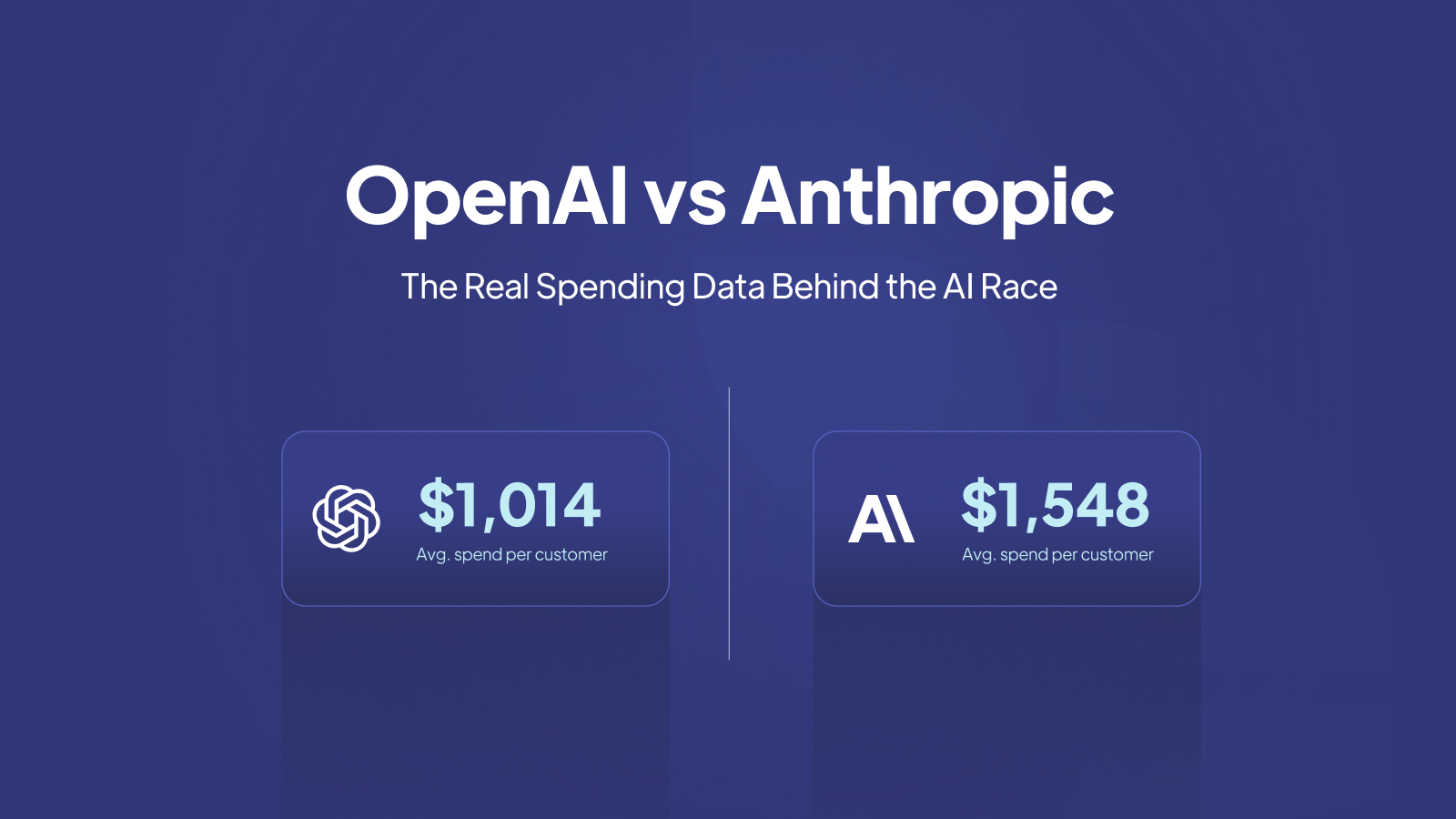

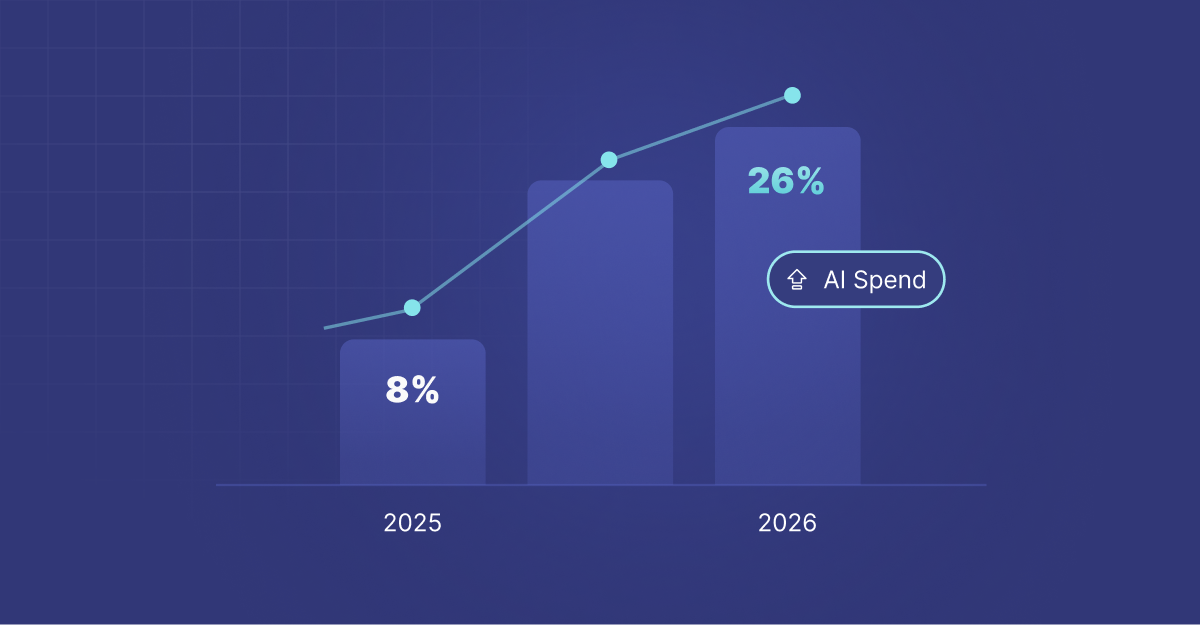

The AI Coding Assistant Migration

Two years ago, most engineering teams didn't have line items for AI coding assistants. Today, many have multiple. Cursor is at $5,857 average spend with 30.5% adoption. Claude is at $3,182 with 38.7% adoption.

These tools are becoming standard productivity layers. Developers aren't choosing between Cursor and Claude for their whole workflow. Many are paying for both, using Claude for strategic thinking and code design and Cursor for rapid implementation within their IDE.

Notably, ChatGPT (39.2% adoption, $5,938 average) and generic GPT-4 are still widely used, but Cursor and Claude are growing faster. This suggests that specialized tools for code (Cursor) and specialized models (Claude) are outcompeting general-purpose LLMs.

The total AI coding tooling spend per engineering team is likely $400-$600 annually now. Three years ago, it was zero. By 2027, it could be $1,000+. This is new budget that's being created, not displaced.

The Forgotten Middle: CI/CD and Testing

CircleCI is at $5,390 average spend with 7.6% adoption. This is low adoption for a tool that most engineering teams actually need. Why?

GitHub Actions. Most teams are consolidating CI/CD into their version control platform. GitHub Actions is free (within generous limits) and integrated. Why pay CircleCI when Actions is included with GitHub?

This is the consolidation trend in action. Teams don't want to manage separate vendors for version control, CI/CD, and issue tracking. They want one place. GitHub is winning this consolidation battle, despite Atlassian offering a full stack too.

Postman, used for API testing and documentation, is at $5,041 average with 14.1% adoption. The low adoption is not surprising: many teams build testing into their CI/CD pipelines using open-source tools rather than commercial solutions.

The Emerging Engineering Stack

Small Team (5-15 engineers)

GitHub ($7,934), Cursor or Claude ($400-$600), 1Password ($4,955), Slack ($7,555 but shared across company). Total: roughly $21,000 annually, or about $1,400 per engineer for a 15-person team.

Mid-Market Team (30-100 engineers)

GitHub or Atlassian ($15,000-$25,000), Datadog ($30,809), Sentry ($3,424), JetBrains ($6,160), Cursor or Claude ($1,000-$2,000), 1Password ($4,955). Total: roughly $60,000-$75,000 annually, or $600-$750 per engineer for a 100-person team.

Enterprise Team (200+ engineers)

Atlassian ($23,608), Datadog ($30,809), Sentry ($3,424), JetBrains ($6,160), custom AI integrations ($5,000-$10,000), internal tooling ($10,000-$20,000). Total: $80,000-$95,000 annually, or $400-$475 per engineer for a 200-person team, reflecting economies of scale.
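The three tier totals above can be reproduced from their line items. The sketch below is a minimal check, not a budgeting model: the `headcount` values are assumptions (the top of each stated range, and 200 for "200+"), and single-price items are written as ranges with equal ends.

```python
# Annual line items per tier as (low, high) USD ranges, copied from the tiers above.
# "headcount" is an assumed team size for the per-engineer figure, not source data.
TIERS = {
    "small": {
        "headcount": 15,
        "items": {
            "GitHub": (7_934, 7_934),
            "Cursor or Claude": (400, 600),
            "1Password": (4_955, 4_955),
            "Slack": (7_555, 7_555),
        },
    },
    "mid-market": {
        "headcount": 100,
        "items": {
            "GitHub or Atlassian": (15_000, 25_000),
            "Datadog": (30_809, 30_809),
            "Sentry": (3_424, 3_424),
            "JetBrains": (6_160, 6_160),
            "Cursor or Claude": (1_000, 2_000),
            "1Password": (4_955, 4_955),
        },
    },
    "enterprise": {
        "headcount": 200,
        "items": {
            "Atlassian": (23_608, 23_608),
            "Datadog": (30_809, 30_809),
            "Sentry": (3_424, 3_424),
            "JetBrains": (6_160, 6_160),
            "Custom AI integrations": (5_000, 10_000),
            "Internal tooling": (10_000, 20_000),
        },
    },
}


def stack_range(items):
    """Sum the low ends and the high ends of every line item."""
    low = sum(lo for lo, _ in items.values())
    high = sum(hi for _, hi in items.values())
    return low, high


for name, tier in TIERS.items():
    low, high = stack_range(tier["items"])
    n = tier["headcount"]
    print(f"{name}: ${low:,}-${high:,} total, "
          f"${low / n:,.0f}-${high / n:,.0f} per engineer at {n} engineers")
```

The exact sums sit inside the rounded ranges quoted for each tier. The per-engineer figure is sensitive to the assumed headcount: the same small-team stack spread across 5 engineers instead of 15 comes to roughly $4,200 each.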

What's Missing: Infrastructure Cost vs Tool Cost

This analysis focuses on SaaS tool spend. But the real engineering budget is infrastructure. Cloud compute (AWS, GCP, Azure) typically dwarfs SaaS tooling. A startup spending $5,000/month on GitHub and Datadog might be spending $30,000/month on AWS.

That said, the SaaS tools are the flywheel that makes engineering possible. Without Datadog, your AWS bill goes up because you're not catching inefficiencies. Without GitHub, you're building internal tools that distract from product. The tool costs are real, but they're optimizing something much larger.

The Trend: Consolidation and Specialization

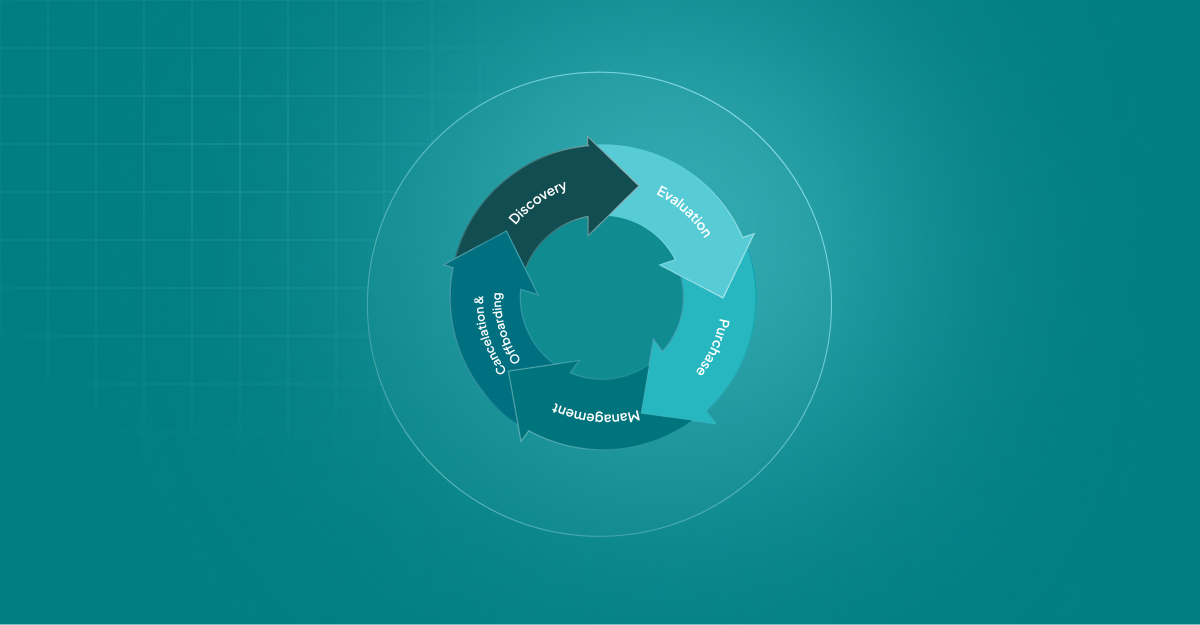

The data shows two opposing forces: consolidation (fewer vendors, more comprehensive platforms) and specialization (new narrow tools like Cursor for AI coding, Langfuse for LLM observability).

Most teams will move toward consolidated platforms (GitHub for source control and CI/CD, Datadog for observability) because integration friction is expensive. But they'll layer in specialized tools for emerging needs (AI coding, LLM monitoring) because general platforms move too slowly.

For CFOs budgeting engineering spend in 2026: expect tool costs to remain 5-10% of total engineering salaries, with the percentage creeping up as AI tools become standard. Build in budget for Cursor or Claude if you haven't already. Evaluate whether you need both Datadog and Sentry, or whether consolidating to one observability platform reduces overhead. And assume that GitHub or Atlassian will remain your platform anchor for at least the next 3-5 years.

This analysis is based on anonymized, aggregated transaction data from Cledara's platform. All figures represent averages, percentages, and ratios. No individual company data is disclosed.